Emotion Recognition

Introduction

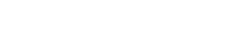

The Emotion Recognition (ER) service recognizes a user's emotions from facial expressions, voice, and typed text.

By recognizing human emotions, the ER service enables natural verbal and nonverbal communication between people and machines.

The LG AI Platform ER service supports the following features:

| Feature | Description |
|---|---|
| Various input types | Uses audio, image, and text as input data. |
| Various emotions | Distinguishes seven emotions: anger, disgust, fear, happiness, sadness, surprise, and neutral. |
| Perception | Recognizes the user's emotions in two ways. |
| Server communication | Connects to the server over HTTP/2 secured with Transport Layer Security (TLS). |
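As an illustration of the server communication row, the following Python sketch builds a client-side TLS context that offers HTTP/2 through ALPN, using only the standard `ssl` and `socket` modules. This page does not document the ER endpoint or a client SDK, so the helper names `make_h2_tls_context` and `negotiated_protocol` are hypothetical, and the sketch only covers the TLS handshake, not the ER API itself.

```python
import socket
import ssl
from typing import Optional


def make_h2_tls_context() -> ssl.SSLContext:
    """Client-side TLS context that offers HTTP/2 ("h2") via ALPN.

    Assumption: the ER server accepts HTTP/2 over TLS, as the feature table
    states; the actual endpoint is not documented here.
    """
    # Verifies the server certificate against the system CA store and
    # checks that the certificate matches the host name.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # HTTP/2 over TLS requires TLS 1.2 or later (RFC 7540, Section 9.2).
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Offer HTTP/2 first and fall back to HTTP/1.1 if the server declines.
    ctx.set_alpn_protocols(["h2", "http/1.1"])
    return ctx


def negotiated_protocol(host: str, port: int = 443) -> Optional[str]:
    """Hypothetical helper: open a TLS connection and report the ALPN result."""
    ctx = make_h2_tls_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            # "h2" means the server agreed to speak HTTP/2 on this connection.
            return tls.selected_alpn_protocol()
```

Called with a real host that supports HTTP/2, `negotiated_protocol` returns `"h2"`. The standard library does not implement HTTP/2 framing, so actually sending requests would still require an HTTP/2-capable client library.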

Structure

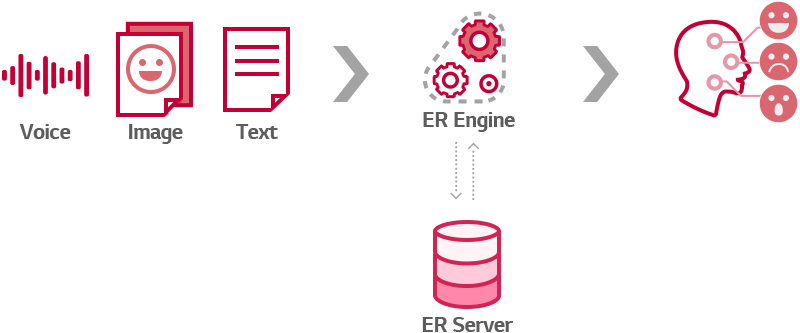

All ER service functions run on a server. The service takes text, visual, and audio data as input and generates appropriate responses.
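To make this input/output flow concrete, here is a minimal Python sketch of what a client might send and receive. Only the three input types and the seven emotion labels come from this page; the request fields, the `{label: score}` response format, and the names `ERRequest` and `top_emotion` are assumptions for illustration, not the service's actual API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Mapping, Optional


class Emotion(Enum):
    """The seven emotions the ER service distinguishes."""
    ANGER = "anger"
    DISGUST = "disgust"
    FEAR = "fear"
    HAPPINESS = "happiness"
    SADNESS = "sadness"
    SURPRISE = "surprise"
    NEUTRAL = "neutral"


@dataclass
class ERRequest:
    """Hypothetical request: any combination of the three input types."""
    text: Optional[str] = None     # typed input
    image: Optional[bytes] = None  # e.g. an encoded photo of the user's face
    audio: Optional[bytes] = None  # e.g. an encoded voice recording

    def modalities(self) -> List[str]:
        """Names of the inputs actually supplied, in a fixed order."""
        supplied = {"text": self.text, "image": self.image, "audio": self.audio}
        return [name for name, value in supplied.items() if value is not None]


def top_emotion(scores: Mapping[str, float]) -> Emotion:
    """Pick the highest-scoring emotion from a hypothetical {label: score} response.

    Raises ValueError if the mapping is empty or any label is not one of
    the seven emotions.
    """
    if not scores:
        raise ValueError("response contained no emotion scores")
    # Emotion(label) raises ValueError for anything outside the seven labels.
    parsed = {Emotion(label): score for label, score in scores.items()}
    return max(parsed, key=lambda emotion: parsed[emotion])
```

For instance, a request carrying only typed text reports `["text"]` from `modalities()`, and `top_emotion({"happiness": 0.7, "neutral": 0.2, "surprise": 0.1})` returns `Emotion.HAPPINESS`.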

Examples of Use

ER service can be applied in many everyday situations, for example:

- Friendship with AI robots

Allows humans and robots to interact and communicate with each other emotionally.

- Chatbot service that recognizes users' emotions

Real-time monitoring of the chatbot conversation lets customer issues be escalated quickly. By recognizing customers' emotions and complaints, businesses can hand the conversation over to a human agent instead of leaving it to the chatbot.
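The escalation idea in the chatbot example can be sketched as a simple rule over the emotions recognized in recent user turns. Everything in this Python sketch is an assumption for illustration: the `EscalationMonitor` name, treating anger, disgust, fear, and sadness as negative, and the window and threshold values. A real deployment would define its own hand-off policy.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque

# Assumption: which of the seven labels count as signs of a frustrated customer.
NEGATIVE_EMOTIONS = frozenset({"anger", "disgust", "fear", "sadness"})


@dataclass
class EscalationMonitor:
    """Hypothetical policy: hand off to a human agent when negative emotions
    dominate the most recent user turns."""
    window: int = 3     # how many recent turns to look at (assumed value)
    threshold: int = 2  # negative turns in the window that trigger hand-off (assumed value)
    _recent: Deque[str] = field(default_factory=deque, init=False, repr=False)

    def observe(self, emotion: str) -> bool:
        """Record the emotion recognized for one turn; return True to escalate."""
        self._recent.append(emotion)
        # Keep only the last `window` turns.
        while len(self._recent) > self.window:
            self._recent.popleft()
        negatives = sum(1 for e in self._recent if e in NEGATIVE_EMOTIONS)
        return negatives >= self.threshold
```

With the defaults, observing `"neutral"`, `"anger"`, then `"sadness"` returns `False`, `False`, `True`: the third turn is the second negative one within the three-turn window.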